Image/Video GenAI: Tools & Workflows

Modern video diffusion models excel at appearance synthesis but still struggle with physical consistency: objects drift, collisions lack realistic rebound, and material responses seldom match their underlying properties. (PhyCo: Learning Controllable Physical Priors for Generative Motion) Diffusion large language models (dLLMs) offer parallel decoding and bidirectional context, but state-of-the-art dLLMs require billions of parameters for competitive performance. (Turning the TIDE: Cross-Architecture Distillation for Diffusion Large Language Models) Humanoid control systems have made...

This matters because visual generation is shifting from novelty outputs toward controllable production workflows. The multi-source signal suggests builders should watch tools that improve editing precision, repeatability, and model integration.

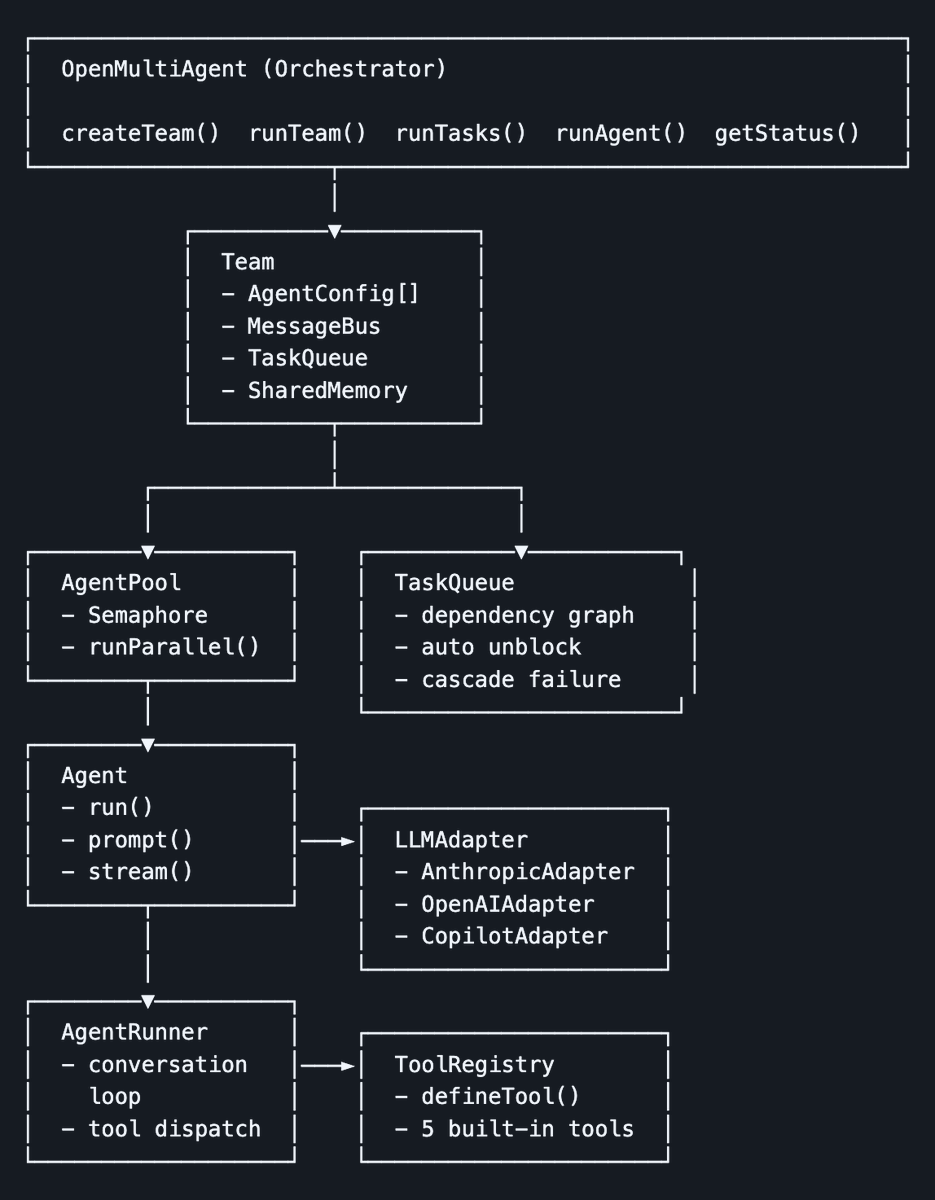

AI Agents: Applications & Builds

We present GLM-5V-Turbo, a step toward native foundation models for multimodal agents. (GLM-5V-Turbo: Toward a Native Foundation Model for Multimodal Agents) We introduce Nemotron 3 Nano Omni, the latest model in the Nemotron multimodal series and the first to natively support audio inputs alongside text, images, and video. (Nemotron 3 Nano Omni: Efficient and Open Multimodal Intelligence) Recent visual generation models have made major progress in photorealism, typography, instruction following, and interactive editing, yet they still struggle with spatial reasoning, persistent state...

This matters because practical agent use cases are widening, but the durable signal is whether they solve repeated workflows instead of one-off demos. The multi-source signal is worth tracking for changes in capability, deployment friction, or operating risk.

Generative 3D Worlds: Explorable World Models

We propose X-WAM, a Unified 4D World Model that unifies real-time robotic action execution and high-fidelity 4D world synthesis (video + 3D reconstruction) in a single framework, addressing the critical limitations of p…. (Unified 4D World Action Modeling from Video Priors with Asynchronous Denoising)

This matters because world-generation systems are moving from rendered clips toward persistent spaces that can be explored, edited, and exported into production tools. The single-source signal is worth tracking for signs that 3D asset creation and simulation workflows are becoming model-driven.

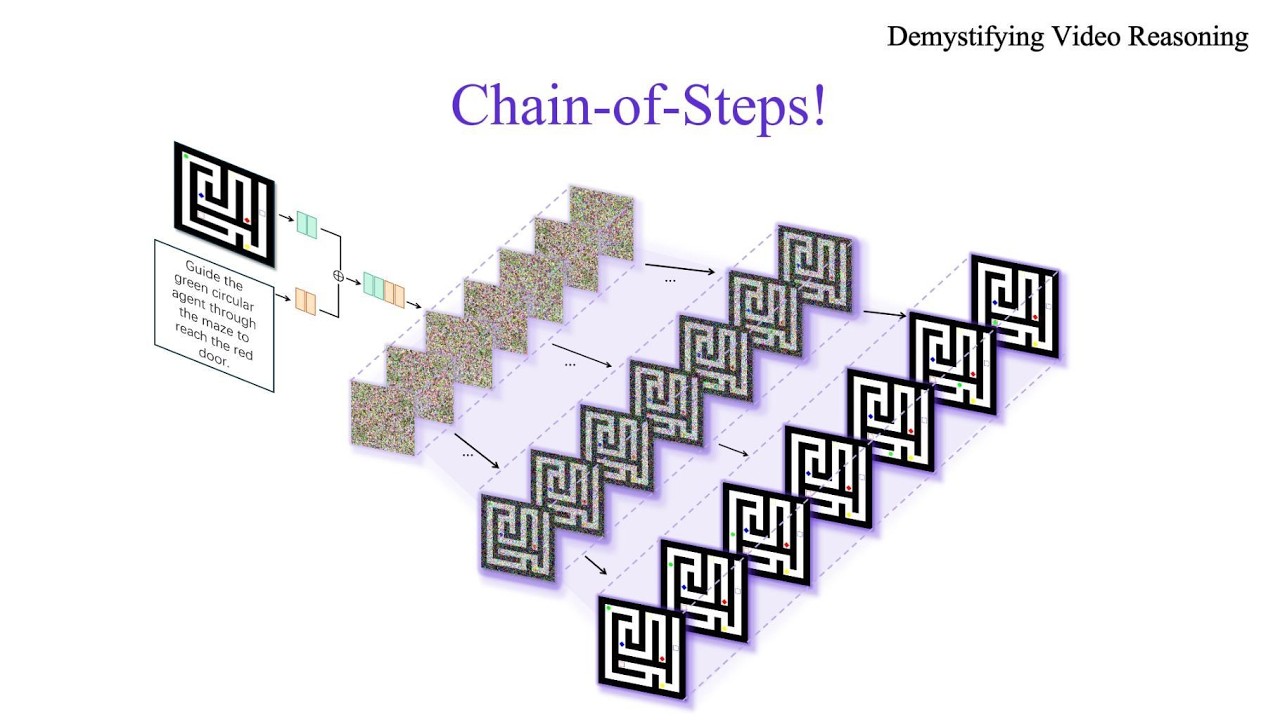

AI Agents: Research & Evals

Autonomous scientific research is significantly advanced thanks to the development of AI agents. (AutoResearchBench: Benchmarking AI Agents on Complex Scientific Literature Discovery) Scientific publication compresses a branching, iterative research process into a linear narrative, discarding the majority of what was discovered along the way. (The Last Human-Written Paper: Agent-Native Research Artifacts) Long-context large language models (LLMs)-for example, Gemini-3.1-Pro and Qwen-3.5-are widely used to empower many real-world applications, such as retrieval-augmented generation, autonomous...

This matters because evaluation work is becoming the control surface for agent progress: better tests shape what builders trust, deploy, and regulate. The multi-source signal is worth tracking for changes in capability, deployment friction, or operating risk.

Also Notable

Heterogeneous Scientific Foundation Model Collaboration

Agentic large language model systems have demonstrated strong capabilities. However, their reliance on language as the universal interface fundamentally limits their applicability to many real-world problems, especially in scientific domains where domain-specific foundation models have been developed to address specialized tasks beyond natural language. In this work, we introduce Eywa, a heterogeneous agentic...

FAMA: Failure-Aware Meta-Agentic Framework for Open-Source LLMs in Interactive Tool Use Environments

Large Language Models are being increasingly deployed as the decision-making core of autonomous agents capable of effecting change in external environments. Yet, in conversational benchmarks, which simulate real-world customer-centric issue resolution scenarios, these agents frequently fail due to the cascading effects of incorrect decision-making. These challenges are particularly pronounced for open-source LLMs...

Agentic Fusion of Large Atomic and Language Models to Accelerate Superconductors Discovery

The discovery of novel materials is critical for global energy and quantum technology transitions. While deep learning has fundamentally reshaped this landscape, existing predictive or generative models typically operate in isolation, lacking the autonomous orchestration required to execute the full discovery process. Here we present ElementsClaw, an agentic framework for materials discovery that synergizes Large...

Efficient Training on Multiple Consumer GPUs with RoundPipe

Fine-tuning Large Language Models (LLMs) on consumer-grade GPUs is highly cost-effective, yet constrained by limited GPU memory and slow PCIe interconnects. Pipeline parallelism combined with CPU offloading mitigates these hardware bottlenecks by reducing communication overhead. However, existing PP schedules suffer from an inherent limitation termed the weight binding issue. Binding uneven model stages (e.g., the...